Relentless Vibe Coding (Part 1)

Vibes aren't enough. Neither is 100% test coverage.

You tell Cursor to add user authentication, and sixty seconds later you have JWT tokens, password hashing, and session management with forgot-password functionality you didn't even ask for. Everything works perfectly until you check your existing features and discover that users can't access their data, the sidebar has vanished, API calls return 401s, and your PostgreSQL migrations have new column names because the AI decided your schema needed "improvement."

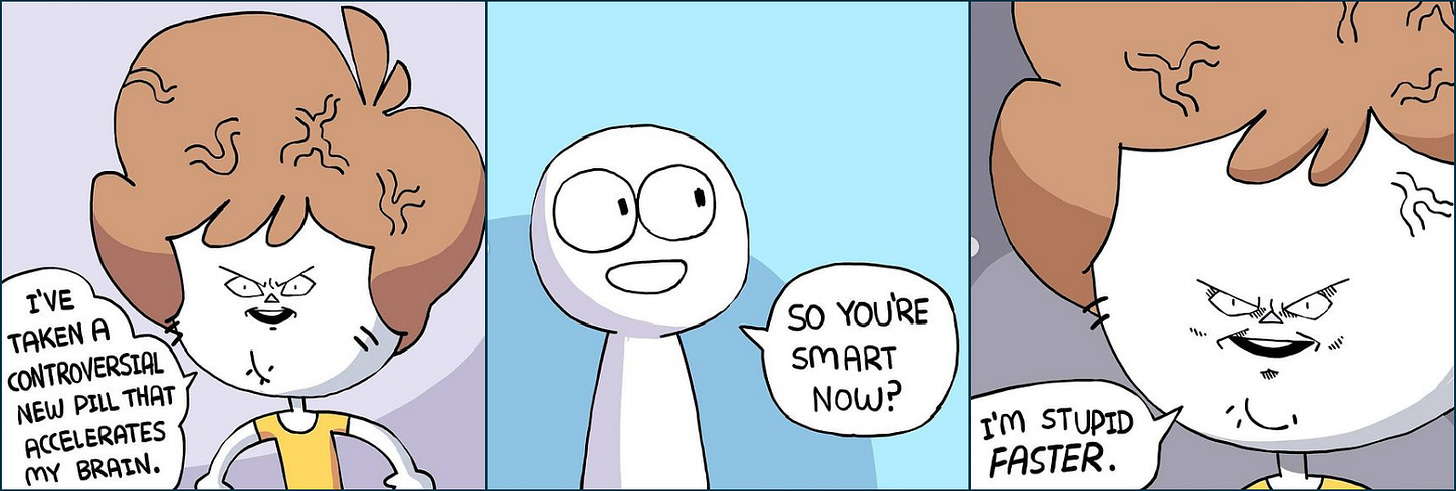

This happens all the time - one feature added, five features broken because the LLM writes code that looks perfect but subtly breaks the environment around it. The standard response is to babysit the AI, pointing out the obviously broken parts, creating plans, sub-agents, retrying, or just plain going in and fixing it ourselves…but that defeats the entire purpose of vibe coding.

LLMs don't get tired or frustrated, they don't have meetings or performance reviews, so when they hit an error, they simply try again. We need to lean into this by creating an environment so strict that the LLM literally can't mess up, going far beyond 100% test coverage to include property-based tests that try thousands of edge cases, mutation testing that verifies your tests actually catch bugs, linters cranked to maximum strictness, and type checking that would make a Haskell programmer cry.

Humans would be miserable in this environment, but LLMs thrive in it because every failure comes with clear, deterministic feedback. Reinforcement learning, the process of learning an optimal policy within an environment, has given us superhuman AI in chess, Go, and lots of other games. It's been used in robotics and even by Google to improve the efficiency of its datacenter cooling. When AI can get feedback from its environment to incorporate into future decisions, it tends to get really, really good.

Yet despite that, I've seen fairly little discussion about taking this approach to code (I mean applying it to what a model outputs, not to how the model is trained). The AI-obsessed among us have figured out pieces of this, but we're still babysitting the models, fixing hallucinations and regressions. We should only have to worry about what needs to happen, not how it happens.

The solution is to create a development environment so deterministic that the LLM gets immediate, unambiguous feedback on every change it makes. Not just "the tests pass" but "every possible way this code could break has been checked and verified by deterministic software." Here's what that looks like in practice:

First, make bugs extremely difficult to hide. Start with 100% test coverage. Add property-based tests that try thousands of edge cases you'd never think to write manually. Layer on mutation testing to verify your tests actually catch bugs.

Second, enforce brutal consistency. Crank your linter to maximum strictness. Enforce all type hints. Set complexity thresholds that force simple, readable code. Remove all dead code automatically.

Third, make the environment itself bulletproof. Enforce reproducible builds, profile memory usage, test against real services with TestContainers, monitor CI post-push for failures. The goal is zero surprises in production.

Normally, the process of adding a feature via LLM looks a little something like this:

1. Co-plan the feature with an LLM

2. Tell LLM to build it or do a little back and forth to steer it towards the feature

3. After some messing around, the LLM implements the feature and breaks five more

This is where people usually stop, decide the result isn't any good, and either reset, try to power through, or give up and implement it manually. Let's see what happens if we keep going with our more rigorous approach:

4. LLM tries to commit feature, pre-commit runs

5. pre-commit runs a litany of tests, linters, type checkers, and everything in between (all of this could run in CI instead, but pre-commit gives faster feedback)

6. LLM sees that it screwed up a ton of other features, begins co-planning again with (or without) you

7. LLM scurries around the codebase fixing all the things that it broke to maintain feature consistency. This can include both tests that it needs to write/fix and code that it needs to fix.

8. GOTO 4

The problem is that normally, LLMs have no way of knowing the behavioral consistency of your app. Yes, an LLM can see that function_1 calls function_2, but it only knows that in a probabilistic sense. That knowledge is loaded into its “brain” via the weights of its internal matrices, not verified against reality. This rigorous style of coding fixes that.
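Steps 4 through 8 amount to a retry loop around deterministic checks. Here is a minimal sketch of that loop in Python. The names `run_checks`, `relentless_loop`, and the `fix` callback are hypothetical and only illustrate the control flow; in practice the "fix" step is the LLM editing the codebase, and the checks are whatever pre-commit runs.

```python
import subprocess
from collections.abc import Callable, Sequence

# One check is one command line, e.g. ["pre-commit", "run", "--all-files"].
Check = Sequence[str]


def run_checks(checks: Sequence[Check]) -> list[str]:
    """Run every check and return the captured output of the ones that failed."""
    failures = []
    for command in checks:
        result = subprocess.run(command, capture_output=True, text=True, check=False)
        if result.returncode != 0:
            failures.append(f"$ {' '.join(command)}\n{result.stdout}{result.stderr}")
    return failures


def relentless_loop(
    checks: Sequence[Check],
    fix: Callable[[list[str]], None],
    max_rounds: int = 10,
) -> bool:
    """Run the checks, hand any failures to `fix`, and repeat until clean."""
    for _ in range(max_rounds):
        failures = run_checks(checks)
        if not failures:
            return True  # everything passed, the commit can go through
        fix(failures)  # hypothetical hook: this is where the LLM edits code
    # Verify the result of the final fix attempt before giving up.
    return not run_checks(checks)
```

The important design choice is that `fix` only ever sees the concrete output of failed checks, not a human's summary of what went wrong.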

Setting it up

This feels like the sort of thing that would be better with examples, so let’s walk through what a system like this would look like. The final repository will be linked at the end as a starting point for future projects. We’ll start with a new project using uv init:

    quinten@quinten:~/Documents/relentless-vibe-coding (master)$ ll
    total 28
    drwxrwxr-x  3 quinten quinten 4096 Jul 19 14:56 ./
    drwxr-xr-x 13 quinten quinten 4096 Jul 19 14:56 ../
    drwxrwxr-x  8 quinten quinten 4096 Jul 19 15:01 .git/
    -rw-rw-r--  1 quinten quinten  109 Jul 19 14:56 .gitignore
    -rw-rw-r--  1 quinten quinten  100 Jul 19 14:56 main.py
    -rw-rw-r--  1 quinten quinten  168 Jul 19 14:56 pyproject.toml
    -rw-rw-r--  1 quinten quinten    5 Jul 19 14:56 .python-version
    -rw-rw-r--  1 quinten quinten    0 Jul 19 14:56 README.md

And inside of main.py we will create a super simple function:

    def add(x, y):
        return x + y

The first step is to set up pre-commit so that all our tests run automatically as we progress. It’s pretty straightforward. Run this to install pre-commit in a virtual env:

    uv add --dev pre-commit

Create .pre-commit-config.yaml at the root of your repo and add this:

    repos: []

Install the hooks and commit:

    pre-commit install
    git add .
    git commit -m "Add pre-commit framework"

Obviously nothing has happened yet since we don’t have any hooks, but we will soon rectify that. Our first battery of tests is to make sure we have 100% test coverage.

100% Code Coverage

First we need to add pytest and coverage as dev dependencies, then create our testing directory/files:

    uv add --dev pytest "coverage[toml]"
    mkdir tests
    touch tests/__init__.py
    touch tests/test_main.py

From there we’ll create a test:

    from main import add


    def test_add():
        assert add(1, 2) == 3
        assert add(0, 0) == 0
        assert add(-1, 1) == 0
        assert add(1.5, 2.5) == 4.0

Add some configuration to our .pre-commit-config.yaml file:

    repos:
      - repo: local
        hooks:
          - id: pytest-coverage
            name: pytest with coverage
            entry: sh -c "coverage run -m pytest && coverage report --sort=cover"
            language: system
            pass_filenames: false

Add some configuration to our pyproject.toml:

    [tool.coverage.run]
    source = ["."]
    omit = ["tests/*", ".venv/*", "*/__init__.py"]

    [tool.coverage.report]
    show_missing = true
    fail_under = 100

Now when we run our pre-commits we get this:

    (relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ pre-commit run --all-files
    pytest with coverage.....................................................Passed

We can test that our coverage is working by adding another function in main.py:

    def add(x, y):
        return x + y

    def subtract(x, y):
        return x - y

Running pre-commit run --all-files again:

    (relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ pre-commit run --all-files
    pytest with coverage.....................................................Failed
    - hook id: pytest-coverage
    - exit code: 2

    ============================= test session starts ==============================
    platform linux -- Python 3.12.3, pytest-8.4.1, pluggy-1.6.0
    rootdir: /home/quinten/Documents/relentless-vibe-coding
    configfile: pyproject.toml
    collected 1 item

    tests/test_main.py .                                                     [100%]

    ============================== 1 passed in 0.01s ===============================
    Name      Stmts   Miss  Cover   Missing
    ---------------------------------------
    main.py       4      1    75%   5
    ---------------------------------------
    TOTAL         4      1    75%
    Coverage failure: total of 75 is less than fail-under=100

We can see that, while all our tests passed, the hook still failed because we haven’t tested our new subtract() function. This is exactly what we were looking for! We’ll add some tests to address this:

    from main import add, subtract


    def test_add():
        assert add(1, 2) == 3
        assert add(0, 0) == 0
        assert add(-1, 1) == 0
        assert add(1.5, 2.5) == 4.0


    def test_subtract():
        assert subtract(1, 2) == -1
        assert subtract(0, 0) == 0
        assert subtract(-1, 1) == -2
        assert subtract(1.5, 2.5) == -1

It would also be good to add these files to our .gitignore:

    # Relentless Vibe Coding
    .coverage
    .pytest_cache/

From there we commit and move on to the next set of tests:

    (relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ git add .
    (relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ git commit -m "Added code coverage"
    pytest with coverage.....................................................Passed
    [master bbff205] Added code coverage
     7 files changed, 147 insertions(+), 3 deletions(-)
     create mode 100644 tests/__init__.py
     create mode 100644 tests/test_main.py

Property Tests

Think of property-based testing as a way to make any individual test more thorough. In our example code we’re just adding and subtracting numbers. But let’s say there was a special case where the program crashes if the two numbers add up to 42:

    def add(x, y):
        val = x + y
        if val == 42:
            raise Exception()
        return x + y

Our current tests don’t cover this. In a more complicated program with more complicated state, a case like this can be even harder to discover. Furthermore, to my knowledge there are no automated tools in the Python ecosystem to detect when we need to apply property-based tests. Compare that to coverage, which records which lines your tests execute and can therefore flag code that hasn’t been tested. This frustrates me more the more I look into it, because it means there is no verifiable or automatable way to ensure that all code that could have property-based tests actually does. If AI can't reliably test its assumptions against verified, deterministic results in the real world, then it will always have a scaling problem (i.e. a point in project size where it becomes ineffective to rely on its autonomy, where it adds 1 feature and creates 5 bugs).
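To make the gap concrete, here is a small standard-library sketch. It is not Hypothesis, just a brute-force search over a small grid of inputs, and the helper name `failing_inputs` is made up for illustration. The hand-picked cases from the earlier test all pass, while the search finds every input pair that triggers the hidden crash.

```python
def add(x, y):
    """The buggy version of add from above: it crashes when the sum is 42."""
    val = x + y
    if val == 42:
        raise Exception
    return val


# The inputs used by the hand-written test_add earlier in this post.
HAND_PICKED = [(1, 2), (0, 0), (-1, 1), (1.5, 2.5)]


def failing_inputs(func, candidates):
    """Return every (x, y) pair for which calling func raises an exception."""
    failures = []
    for x, y in candidates:
        try:
            func(x, y)
        except Exception:
            failures.append((x, y))
    return failures


# Every pair of integers between 0 and 50 inclusive.
GRID = [(x, y) for x in range(51) for y in range(51)]

print(failing_inputs(add, HAND_PICKED))     # no failures among the manual cases
print(len(failing_inputs(add, GRID)) > 0)   # the search does hit the crash
```

A property-based testing library does this far more cleverly, generating inputs from a description of their type and shrinking any failure to a minimal example, but the underlying idea is the same: let the machine pick the inputs.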

There is some hope here with SupGen by Victor Taelin, but that is still in development and is unlikely to get a Python port anytime soon. Until a solution for automated detection arrives, we will have to rely on a system prompt that instructs the LLM to use property-based testing where appropriate when creating tests. To get started, we’ll install hypothesis:

    uv add --dev hypothesis

In our testing file, we’re going to modify our tests to be property based:

    from main import add, subtract
    from hypothesis import given, strategies as st


    @given(st.integers(min_value=0, max_value=50), st.integers(min_value=0, max_value=50))
    def test_add_commutative(x, y):
        assert add(x, y) == add(y, x)


    @given(st.integers(), st.integers())
    def test_subtract_add_inverse(x, y):
        assert subtract(add(x, y), y) == x

The given decorator tells Hypothesis to automatically generate random data of whatever types we specify. In this case, we use a strategy to define both x and y as integers. We can specify how many samples to generate in our pyproject.toml file:

    [tool.hypothesis]
    max_examples = 10000

So now, instead of a handful of hand-written cases, each property is checked against up to 10,000 generated inputs. These can include things like large negative numbers, zero, and extremely large values that manual testing would miss. In this case, we limited the range of the integers in the first test to make sure we hit our exception. Running tests nets us this:

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ pre-commit run --all-files

pytest with coverage.....................................................Failed

- hook id: pytest-coverage

- exit code: 1

============================= test session starts ==============================

platform linux -- Python 3.12.3, pytest-8.4.1, pluggy-1.6.0

rootdir: /home/quinten/Documents/relentless-vibe-coding

configfile: pyproject.toml

plugins: hypothesis-6.136.0

collected 2 items

tests/test_main.py F. [100%]

=================================== FAILURES ===================================

_____________________________ test_add_commutative _____________________________

@given(st.integers(min_value=0, max_value=50), st.integers(min_value=0, max_value=50))

> def test_add_commutative(x, y):

^^^

tests/test_main.py:5:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

tests/test_main.py:6: in test_add_commutative

assert add(x, y) == add(y, x)

^^^^^^^^^

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

x = 0, y = 42

def add(x, y):

val = x + y

if val == 42:

> raise Exception()

E Exception

E Falsifying example: test_add_commutative(

E x=0,

E y=42,

E )

E Explanation:

E These lines were always and only run by failing examples:

E /home/quinten/Documents/relentless-vibe-coding/main.py:4

main.py:4: Exception

================================== Hypothesis ==================================

`git apply .hypothesis/patches/2025-07-27--9225b520.patch` to add failing examples to your code.

=========================== short test summary info ============================

FAILED tests/test_main.py::test_add_commutative - Exception

========================= 1 failed, 1 passed in 1.35s ==========================

Looks like it caught our exception! Now that we know that it works, we can fix the code in our add function and commit it. Since hypothesis runs as a part of our regular test suite, we don’t need to add anything to our pre-commit file. We should also add .hypothesis/ to our .gitignore file.
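One caveat on the max_examples setting used earlier in this section: as far as I know, Hypothesis does not read a [tool.hypothesis] table from pyproject.toml, and its documented way to change defaults is a settings profile registered in code. A minimal sketch, assuming a tests/conftest.py file (not part of the original walkthrough) and a hypothetical profile name "relentless":

```python
# tests/conftest.py (assumed location; pytest imports it before collecting tests)
from hypothesis import settings

# Register a named profile that raises the number of generated examples,
# then make it the default for every @given test in the suite.
settings.register_profile("relentless", max_examples=10_000)
settings.load_profile("relentless")
```

If test runs become too slow for a pre-commit hook, a smaller profile for local commits and a larger one for CI is a common split.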

    (relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ git commit -m "Added property-based testing with hypothesis"
    pytest with coverage.....................................................Passed
    [master 5d0b100] Added property-based testing with hypothesis
     4 files changed, 48 insertions(+)

Mutation Testing

Here's the thing about code coverage though: it only tells you which lines your tests execute, not whether your tests would actually catch bugs. You could have 100% coverage with tests that are too weak to catch real problems. This is where mutation testing comes in.

Mutation testing works by intentionally breaking your code in small, systematic ways (called "mutations") and then running your tests to see if they catch the breakage. If your tests still pass when the code is broken, then your tests aren't strong enough.
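The mechanics can be sketched with nothing but the standard library. This is a toy illustration of the idea, not how mutmut is implemented: it applies a single mutation (every `+` becomes `*`) using the `ast` module, and the helper names are made up for this example.

```python
import ast

SOURCE = "def add(x, y):\n    return x + y\n"


class SwapAddForMult(ast.NodeTransformer):
    """A single mutation operator: replace every `+` with `*`."""

    def visit_BinOp(self, node):
        self.generic_visit(node)
        if isinstance(node.op, ast.Add):
            node.op = ast.Mult()
        return node


def load_add(source, mutate=False):
    """Compile the source (optionally mutated) and return its add function."""
    tree = ast.parse(source)
    if mutate:
        tree = ast.fix_missing_locations(SwapAddForMult().visit(tree))
    namespace = {}
    exec(compile(tree, "<mutant>", "exec"), namespace)
    return namespace["add"]


def weak_test(add):
    return add(5, 3) > 0  # passes for 8 and for 15


def strong_test(add):
    return add(5, 3) == 8  # only passes for real addition


def mutant_survives(test):
    """A mutant survives when the test still passes against the broken code."""
    return test(load_add(SOURCE, mutate=True))


print(mutant_survives(weak_test))    # the weak test does not notice the mutation
print(mutant_survives(strong_test))  # the strong test kills the mutant
```

A real tool applies many such operators, one at a time, and reports which mutants your test suite failed to kill.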

For example, let's say we have our add function with this test:

    def test_add():
        result = add(5, 3)
        assert result > 0  # Weak assertion

This test gives us 100% coverage on our add function. But watch what happens with mutation testing. The tool might change our function from:

    def add(x, y):
        return x + y

To:

    def add(x, y):
        return x * y  # Mutation: changed + to *

When we run our pre-commits, our tests still pass even though they shouldn’t:

    (relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ pre-commit run --all-files
    pytest with coverage.....................................................Passed

Our weak test still passes because 5*3=15, which is still greater than 0. The mutation "survived," which tells us our test isn't actually verifying that addition is happening. To add mutation testing, first we’ll add the mutmut package:

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ uv add --dev mutmut

Resolved 32 packages in 388ms

Prepared 2 packages in 293ms

Installed 12 packages in 10ms

+ click==8.2.1

+ libcst==1.7.0

+ linkify-it-py==2.0.3

+ markdown-it-py==3.0.0

+ mdit-py-plugins==0.4.2

+ mdurl==0.1.2

+ mutmut==3.3.0

+ rich==14.1.0

+ setproctitle==1.3.6

+ textual==5.0.1

+ typing-extensions==4.14.1

+ uc-micro-py==1.0.3

Then we’ll add a configuration section to our pyproject.toml:

    # We need to add this section to prevent pytest from collecting duplicate tests
    # from both the mutants/ and tests/ folders, which causes import conflicts otherwise
    [tool.pytest.ini_options]
    testpaths = ["tests"]
    norecursedirs = ["mutants"]

    [tool.mutmut]
    paths_to_mutate = ["main.py"]
    tests_dir = "tests/"
    runner = "python -m pytest"
    backup = false

And we’ll add a configuration section to our .pre-commit-config.yaml file:

          - id: mutmut
            name: mutation testing
            entry: mutmut run
            language: system
            pass_filenames: false

Add some files to our .gitignore:

    .mutmut-cache/
    mutants/

Now we run our pre-commit and it... passes?

    (relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ pre-commit run --all-files
    pytest with coverage.....................................................Passed
    mutation testing.........................................................Passed

That’s not right, the test is clearly faulty. What’s going on here? Well, two things:

1. Even though our add(5, 3) test is weak, its weaknesses are covered by our separate, stronger property tests. To verify our failure, we need to comment out those property tests.

2. mutmut has an exit code of 0 even when it finds mutants that survived, and it doesn’t print them to stdout.
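The second point means the hook has to inspect mutmut's results itself. One way to do that is a small parser rather than a shell one-liner. This is a sketch that assumes `mutmut results` prints one `name: status` line per mutant, which is the format mutmut 3.3.0 produced in this project; the function name `surviving_mutants` is hypothetical.

```python
def surviving_mutants(results_text: str) -> list[str]:
    """Return the names of mutants whose reported status is 'survived'."""
    survivors = []
    for line in results_text.splitlines():
        # Split on the last ": " so that dotted mutant names stay intact.
        name, separator, status = line.strip().rpartition(": ")
        if separator and status == "survived":
            survivors.append(name)
    return survivors


sample = "main.x_add__mutmut_1: survived\nmain.x_subtract__mutmut_1: no tests\n"
print(surviving_mutants(sample))
```

A hook script could feed it the captured output of `mutmut results` and exit with a non-zero status whenever the returned list is not empty.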

Commenting out the tests with this in mind:

    from main import add, subtract
    from hypothesis import given, strategies as st

    # @given(st.integers(min_value=0, max_value=50), st.integers(min_value=0, max_value=50))
    # def test_add_commutative(x, y):
    #     assert add(x, y) == add(y, x)
    #
    # @given(st.integers(), st.integers())
    # def test_subtract_add_inverse(x, y):
    #     assert subtract(add(x, y), y) == x

    def test_add():
        result = add(5, 3)
        assert result > 0  # Weak assertion

And modifying our pre-commit file to print out the surviving mutants:

          - id: mutmut
            name: mutation testing
            entry: sh -c "mutmut run && if mutmut results | grep -q survived; then echo 'Surviving mutants found:'; mutmut results; exit 1; fi"
            language: system
            pass_filenames: false

Now when we run our pre-commit we get what we expected:

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ pre-commit run --all-files

pytest with coverage.....................................................Failed

- hook id: pytest-coverage

- exit code: 2

============================= test session starts ==============================

platform linux -- Python 3.12.3, pytest-8.4.1, pluggy-1.6.0

rootdir: /home/quinten/Documents/relentless-vibe-coding

configfile: pyproject.toml

testpaths: tests

plugins: hypothesis-6.136.0

collected 1 item

tests/test_main.py . [100%]

=============================== warnings summary ===============================

.venv/lib/python3.12/site-packages/_hypothesis_pytestplugin.py:461

/home/quinten/Documents/relentless-vibe-coding/.venv/lib/python3.12/site-packages/_hypothesis_pytestplugin.py:461: UserWarning: Skipping collection of '.hypothesis' directory - this usually means you've explicitly set the `norecursedirs` pytest config option, replacing rather than extending the default ignores.

warnings.warn(

-- Docs: https://docs.pytest.org/en/stable/how-to/capture-warnings.html

========================= 1 passed, 1 warning in 0.15s =========================

Name Stmts Miss Cover Missing

---------------------------------------

main.py 4 1 75% 5

---------------------------------------

TOTAL 4 1 75%

Coverage failure: total of 75 is less than fail-under=100

mutation testing.........................................................Failed

- hook id: mutmut

- exit code: 1

⠦ Generating mutants

done in 21ms

⠙ Listing all tests

⠦ Running clean tests

done

⠋ Running forced fail test

done

Running mutation testing

⠸ 2/2 🎉 0 🫥 1 ⏰ 0 🤔 0 🙁 1 🔇 0

40.40 mutations/second

Surviving mutants found:

main.x_add__mutmut_1: survived

main.x_subtract__mutmut_1: no tests

Great! Now that we know it’s working, we can remove the faulty test, uncomment our property tests, install our new pre-commit, and commit our code:

    (relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ pre-commit install
    pre-commit installed at .git/hooks/pre-commit
    (relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ git commit -m "Added mutation testing to pre-commit"
    pytest with coverage.....................................................Passed
    mutation testing.........................................................Passed
    [master cddff04] Added mutation testing to pre-commit
     5 files changed, 241 insertions(+), 30 deletions(-)

Linting

We’ll be using ruff for linting. Install it with this:

    (relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ uv add --dev ruff
    Resolved 33 packages in 723ms
    Prepared 1 package in 2.09s
    Installed 1 package in 3ms
     + ruff==0.12.5

Add these rules to pyproject.toml:

    [tool.ruff]
    line-length = 100
    target-version = "py312"

    [tool.ruff.lint]
    select = ["ALL"]
    ignore = []

And add the pre-commit hook:

          - id: ruff
            name: ruff linting and formatting
            entry: sh -c "ruff check --fix --exit-non-zero-on-fix . && ruff format ."
            language: system
            pass_filenames: false

Running our pre-commit hooks results in a ton of linter errors:

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ pre-commit run --all-files

pytest with coverage.....................................................Passed

mutation testing.........................................................Passed

ruff linting and formatting..............................................Failed

- hook id: ruff

- exit code: 1

warning: `incorrect-blank-line-before-class` (D203) and `no-blank-line-before-class` (D211) are incompatible. Ignoring `incorrect-blank-line-before-class`.

warning: `multi-line-summary-first-line` (D212) and `multi-line-summary-second-line` (D213) are incompatible. Ignoring `multi-line-summary-second-line`.

main.py:1:1: D100 Missing docstring in public module

main.py:1:5: ANN201 Missing return type annotation for public function `add`

|

1 | def add(x, y):

| ^^^ ANN201

2 | return x + y

|

= help: Add return type annotation

main.py:1:5: D103 Missing docstring in public function

|

1 | def add(x, y):

| ^^^ D103

2 | return x + y

|

main.py:1:9: ANN001 Missing type annotation for function argument `x`

|

1 | def add(x, y):

| ^ ANN001

2 | return x + y

|

main.py:1:12: ANN001 Missing type annotation for function argument `y`

|

1 | def add(x, y):

| ^ ANN001

2 | return x + y

|

main.py:4:5: ANN201 Missing return type annotation for public function `subtract`

|

2 | return x + y

3 |

4 | def subtract(x, y):

| ^^^^^^^^ ANN201

5 | return x - y

|

= help: Add return type annotation

main.py:4:5: D103 Missing docstring in public function

|

2 | return x + y

3 |

4 | def subtract(x, y):

| ^^^^^^^^ D103

5 | return x - y

|

main.py:4:14: ANN001 Missing type annotation for function argument `x`

|

2 | return x + y

3 |

4 | def subtract(x, y):

| ^ ANN001

5 | return x - y

|

main.py:4:17: ANN001 Missing type annotation for function argument `y`

|

2 | return x + y

3 |

4 | def subtract(x, y):

| ^ ANN001

5 | return x - y

|

tests/__init__.py:1:1: D104 Missing docstring in public package

tests/test_main.py:1:1: D100 Missing docstring in public module

tests/test_main.py:8:5: ANN201 Missing return type annotation for public function `test_add_commutative`

|

7 | @given(st.integers(min_value=0, max_value=50), st.integers(min_value=0, max_value=50))

8 | def test_add_commutative(x, y):

| ^^^^^^^^^^^^^^^^^^^^ ANN201

9 | assert add(x, y) == add(y, x)

|

= help: Add return type annotation: `None`

tests/test_main.py:8:5: D103 Missing docstring in public function

|

7 | @given(st.integers(min_value=0, max_value=50), st.integers(min_value=0, max_value=50))

8 | def test_add_commutative(x, y):

| ^^^^^^^^^^^^^^^^^^^^ D103

9 | assert add(x, y) == add(y, x)

|

tests/test_main.py:8:26: ANN001 Missing type annotation for function argument `x`

|

7 | @given(st.integers(min_value=0, max_value=50), st.integers(min_value=0, max_value=50))

8 | def test_add_commutative(x, y):

| ^ ANN001

9 | assert add(x, y) == add(y, x)

|

tests/test_main.py:8:29: ANN001 Missing type annotation for function argument `y`

|

7 | @given(st.integers(min_value=0, max_value=50), st.integers(min_value=0, max_value=50))

8 | def test_add_commutative(x, y):

| ^ ANN001

9 | assert add(x, y) == add(y, x)

|

tests/test_main.py:9:5: S101 Use of `assert` detected

|

7 | @given(st.integers(min_value=0, max_value=50), st.integers(min_value=0, max_value=50))

8 | def test_add_commutative(x, y):

9 | assert add(x, y) == add(y, x)

| ^^^^^^ S101

10 |

11 | @given(st.integers(), st.integers())

|

tests/test_main.py:12:5: ANN201 Missing return type annotation for public function `test_subtract_add_inverse`

|

11 | @given(st.integers(), st.integers())

12 | def test_subtract_add_inverse(x, y):

| ^^^^^^^^^^^^^^^^^^^^^^^^^ ANN201

13 | assert subtract(add(x, y), y) == x

|

= help: Add return type annotation: `None`

tests/test_main.py:12:5: D103 Missing docstring in public function

|

11 | @given(st.integers(), st.integers())

12 | def test_subtract_add_inverse(x, y):

| ^^^^^^^^^^^^^^^^^^^^^^^^^ D103

13 | assert subtract(add(x, y), y) == x

|

tests/test_main.py:12:31: ANN001 Missing type annotation for function argument `x`

|

11 | @given(st.integers(), st.integers())

12 | def test_subtract_add_inverse(x, y):

| ^ ANN001

13 | assert subtract(add(x, y), y) == x

|

tests/test_main.py:12:34: ANN001 Missing type annotation for function argument `y`

|

11 | @given(st.integers(), st.integers())

12 | def test_subtract_add_inverse(x, y):

| ^ ANN001

13 | assert subtract(add(x, y), y) == x

|

tests/test_main.py:13:5: S101 Use of `assert` detected

|

11 | @given(st.integers(), st.integers())

12 | def test_subtract_add_inverse(x, y):

13 | assert subtract(add(x, y), y) == x

| ^^^^^^ S101

|

Found 21 errors.

No fixes available (2 hidden fixes can be enabled with the `--unsafe-fixes` option).

This is about the point where most devs would throw their hands up and say “f*ck it”, but remember we’re not building an environment for you, we’re building an environment for the LLMs. Forcing the LLMs to stay consistent at each stage makes it easier for every subsequent feature to be added, since it’s not relying on code that might not be a good starting point.

A few things about this output that I want to point out. First of all, the warnings at the top tell us that there are conflicting rules. In these cases you should just pick one to enforce. I’ll choose to ignore these:

    ignore = ["D211", "D213"]

Second, most of these linter errors are about either types or docstrings, and every rule is described in the Ruff rules documentation. So while it looks like a lot, it’s really not. Here are the updated functions and tests after addressing all the errors.

main.py:

    """Simple arithmetic operations."""


    def add(x: int, y: int) -> int:
        """Add two integers."""
        return x + y


    def subtract(x: int, y: int) -> int:
        """Subtract y from x."""
        return x - y

tests/test_main.py:

    """Tests for main module arithmetic functions."""

    from hypothesis import given
    from hypothesis import strategies as st

    from main import add, subtract


    @given(st.integers(min_value=0, max_value=50), st.integers(min_value=0, max_value=50))
    def test_add_commutative(x: int, y: int) -> None:
        """Test that addition is commutative."""
        assert add(x, y) == add(y, x)  # noqa: S101


    @given(st.integers(), st.integers())
    def test_subtract_add_inverse(x: int, y: int) -> None:
        """Test that subtraction is the inverse of addition."""
        assert subtract(add(x, y), y) == x  # noqa: S101

With every rule enabled, Ruff flags assert statements in your code (S101), but since assert is the standard way of writing pytest tests, we can tell it to ignore them with # noqa: S101.
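As the test suite grows, one noqa comment per assertion gets noisy. Ruff also supports per-file ignores, so an alternative (not what the original setup uses) is to switch S101 off for the tests directory only. A sketch, assuming all tests live under tests/:

```toml
[tool.ruff.lint.per-file-ignores]
# Allow plain assert statements in test files only.
"tests/*" = ["S101"]
```

With this in place the inline # noqa: S101 comments should no longer be needed, while assert stays flagged everywhere outside tests/.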

After that we can add .ruff_cache/ to our .gitignore and commit:

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ git status

On branch master

Your branch is ahead of 'origin/master' by 1 commit.

(use "git push" to publish your local commits)

Changes not staged for commit:

(use "git add <file>..." to update what will be committed)

(use "git restore <file>..." to discard changes in working directory)

modified: .gitignore

modified: .pre-commit-config.yaml

modified: main.py

modified: pyproject.toml

modified: tests/__init__.py

modified: tests/test_main.py

modified: uv.lock

no changes added to commit (use "git add" and/or "git commit -a")

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ git add .

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ git commit -m "Added ruff linter"

pytest with coverage.....................................................Passed

mutation testing.........................................................Passed

ruff linting and formatting..............................................Passed

[master d696549] Added ruff linter

7 files changed, 66 insertions(+), 7 deletions(-)

Type Hints

Ruff is really good at telling you whether you have missing types, but if you try to pass an int to a function expecting a str, it won’t catch that. This is where mypy comes in. We need to make sure the types across our project are handled correctly. To begin, we’ll install mypy:

    (relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ uv add --dev mypy
    Resolved 36 packages in 384ms
    Installed 3 packages in 15ms
     + mypy==1.17.0
     + mypy-extensions==1.1.0
     + pathspec==0.12.1

After that we’ll add a ton of rules to our pyproject.toml:

    [tool.mypy]
    python_version = "3.12"
    strict = true
    disallow_any_unimported = true
    disallow_any_expr = true
    disallow_any_explicit = true
    disallow_any_generics = true
    disallow_subclassing_any = true
    extra_checks = true
    strict_bytes = true
    local_partial_types = true
    show_error_codes = true
    pretty = true
    show_absolute_path = true
    warn_return_any = true
    warn_unused_configs = true
    warn_unused_ignores = true
    warn_redundant_casts = true
    strict_equality = true
    no_implicit_reexport = true
    follow_imports = "normal"
    enable_error_code = ["exhaustive-match"]
    exclude = ["mutants/"]
    ignore_missing_imports = false
    disallow_untyped_calls = true

Then we’ll add it to our pre-commits:

          - id: mypy
            name: mypy type checking
            entry: mypy .
            language: system
            pass_filenames: false

Not all libraries ship complete type information, since strict typing isn’t really how the Python community does things. Rules this strict will therefore cause issues when interacting with those libraries, as you can see here:

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ pre-commit run --all-files

pytest with coverage.....................................................Passed

mutation testing.........................................................Passed

ruff linting and formatting..............................................Passed

mypy type checking.......................................................Failed

- hook id: mypy

- exit code: 1

/home/quinten/Documents/relentless-vibe-coding/tests/test_main.py:9: error:

Expression type contains "Any" (has type

"Callable[[Callable[..., Coroutine[Any, Any, None] | None]], Callable[..., None]]")

[misc]

@given(st.integers(min_value=0, max_value=50), st.integers(min_value=0...

^~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~...

/home/quinten/Documents/relentless-vibe-coding/tests/test_main.py:10: error:

Type of decorated function contains type "Any" ("Callable[..., None]") [misc]

def test_add_commutative(x: int, y: int) -> None:

^

/home/quinten/Documents/relentless-vibe-coding/tests/test_main.py:15: error:

Expression type contains "Any" (has type

"Callable[[Callable[..., Coroutine[Any, Any, None] | None]], Callable[..., None]]")

[misc]

@given(st.integers(), st.integers())

^~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

/home/quinten/Documents/relentless-vibe-coding/tests/test_main.py:16: error:

Type of decorated function contains type "Any" ("Callable[..., None]") [misc]

def test_subtract_add_inverse(x: int, y: int) -> None:

^

Found 4 errors in 1 file (checked 3 source files)

There are many ways we can approach this. We could say that any library we import that doesn’t have typing information can just be ignored completely, but that causes issues down the line when the LLMs start changing things. We could remove the rule that disallows Any from the repo, but that kind of defeats the purpose of the typing system, and you just know that some LLM down the line is going to take that opportunity rather than doing it properly. We could add inline type ignores, but once again that defeats the purpose of the typing system we’re implementing and makes the code uglier.

No, we will take the high path, the golden path, the reliable path. We are going to create stubs. mypy stubs are files that tell the type checker what types your Python functions accept and return without containing any actual code. This functions like a type-only wrapper around any library code we use. We’re going to add a couple lines to our pyproject.toml file in the [tool.mypy] section:

[tool.mypy]

...

follow_imports = "silent" # changed from "normal"

mypy_path = "stubs"

In our project folder we’re going to create a folder structure like so:

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ tree stubs/

stubs/
└── hypothesis
    ├── __init__.pyi
    ├── py.typed
    └── strategies
        └── __init__.pyi

3 directories, 3 files

In the strategies’ init file we are going to define the types for our integers strategy:

class SearchStrategy[T]: ...

def integers(
    min_value: int | None = None,
    max_value: int | None = None,
) -> SearchStrategy[int]: ...

And likewise for our @given decorator in the init file in the hypothesis directory:

from collections.abc import Callable

from hypothesis.strategies import SearchStrategy

def given[T1, T2](
    arg1: SearchStrategy[T1],
    arg2: SearchStrategy[T2],
) -> Callable[[Callable[[T1, T2], None]], Callable[[], None]]: ...

These stubs tell mypy what the defined types are for the various functions we are calling from Hypothesis. We also have an empty file, py.typed, that marks it as a typed package.

But there’s still a problem. LLMs can still bypass the stubs by littering the codebase with # type: ignore comments (and other such bypasses). We can catch these with a simple regex hook that tells whoever made the change to create stubs rather than reach for these quick bypass hacks:

- id: no-type-bypasses
  name: enforce no type bypasses
  entry: bash -c
  args: ['! (grep -rE "# type: ignore|from typing import (Any|cast)|import (Any|cast)|: Any|cast\(|# mypy:|getattr\(|setattr\(|# noqa" --include="*.py" --exclude-dir=.venv --exclude-dir=.git . | grep -v "# noqa: S101") || (echo "Type bypass attempts found! Create proper type stubs instead." && exit 1)']
  language: system
  pass_filenames: false

And with all that, when we run our pre-commits, everything passes:

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ pre-commit run --all-files

pytest with coverage.....................................................Passed

mutation testing.........................................................Passed

ruff linting and formatting..............................................Passed

enforce no type bypasses.................................................Passed

mypy type checking.......................................................Passed

Finally we add .mypy_cache/ to our .gitignore and commit:

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ git status

On branch master

Your branch is ahead of 'origin/master' by 2 commits.

(use "git push" to publish your local commits)

Changes to be committed:

(use "git restore --staged <file>..." to unstage)

modified: .gitignore

modified: .pre-commit-config.yaml

modified: pyproject.toml

new file: stubs/hypothesis/__init__.pyi

new file: stubs/hypothesis/py.typed

new file: stubs/hypothesis/strategies/__init__.pyi

modified: uv.lock

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ git commit -m "Added mypy and hypothesis stubs"

pytest with coverage.....................................................Passed

mutation testing.........................................................Passed

ruff linting and formatting..............................................Passed

enforce no type bypasses.................................................Passed

mypy type checking.......................................................Passed

[master 4eae66f] Added mypy and hypothesis stubs

7 files changed, 100 insertions(+)

create mode 100644 stubs/hypothesis/__init__.pyi

create mode 100644 stubs/hypothesis/py.typed

create mode 100644 stubs/hypothesis/strategies/__init__.pyi

Dead Code

Yep. It happened again, though in a slightly different way. Vulture and deadcode exist, but the former is not reliable enough to use for LLMs and the latter looks like it’s been either abandoned or doesn’t have the features we need.

This matters because of context rot. Let’s say you ask the LLM to create a new feature, then you immediately fill its context with 195,000 tokens’ worth of irrelevant junk and 5,000 tokens’ worth of actual, relevant context. Compare that to a request to create a new feature that only has those 5,000 relevant tokens. Every LLM is going to perform better on the second one since it’s not sifting through irrelevant information to figure out what actually matters.

Dead code causes context rot. Context rot causes the LLM to mess up more, causing more context rot, until nothing works anymore. There’s a bit of poetry in the parallels to organic life there.
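To give a feel for what a dead code check does, and why it is hard to make reliable, here is a deliberately naive sketch built on Python’s ast module. The function name unused_functions is made up for this example, and this is not how Vulture or deadcode work. It only looks at a single file and treats any matching name or attribute anywhere as a "use", so dynamic calls, re-exports, and framework entry points would all be misjudged.

```python
import ast


def unused_functions(source: str) -> set[str]:
    """Return names of functions defined in `source` but never referenced in it."""
    tree = ast.parse(source)

    # Every function (sync or async) defined anywhere in the file.
    defined = {
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    }

    # Every bare name (foo) and attribute access (obj.foo) that appears.
    used = {node.id for node in ast.walk(tree) if isinstance(node, ast.Name)}
    used |= {node.attr for node in ast.walk(tree) if isinstance(node, ast.Attribute)}

    return defined - used


SAMPLE = """
def add(x, y):
    return x + y

def subtract(x, y):
    return x - y

def legacy_helper():
    return add(1, 2)

print(subtract(add(2, 3), 3))
"""

print(unused_functions(SAMPLE))
```

Here legacy_helper is reported because nothing references it, even though it calls add itself. A real checker would also need to follow imports across files, which is where the false positives and false negatives start to pile up.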

Complexity Limits

Complexity is very, very bad. It creeps into your codebase over time and makes it more and more difficult to reason about. With LLMs that “over time” arrives much sooner. There need to be automated and verifiable measures against complexity. Thankfully, a metric called Cyclomatic Complexity was introduced in 1976 to track that complexity and assign a number to it. As an example:

def check(x, y):
    """Minimal function with cyclomatic complexity of 5.

    Complexity calculation:
    Base: 1
    + 4 decision points (if statements)
    = 5 total
    """
    if x > 0:  # +1 complexity (decision point 1)
        if y > 0:  # +1 complexity (decision point 2)
            return 1
        else:
            return 2
    elif x < 0:  # +1 complexity (decision point 3)
        return 3
    elif y != 0:  # +1 complexity (decision point 4)
        return 4
    return 5  # Base path (no decision)

The way to think about cyclomatic complexity is that every point where the code branches or a decision needs to be made adds 1 to the complexity. A more exhaustive example might look like this:

def example(x, items):  # Base: 1
    if x > 0:  # +1 (total: 2)
        for item in items:  # +1 (total: 3)
            if item or x == 5:  # +1 (total: 4) - compound condition counts as 1
                while x < 10:  # +1 (total: 5)
                    x += 1
    return x  # Total: 5

Generally speaking, if a function’s cyclomatic complexity is higher than 10, it starts to become difficult to reason about all of the branching paths your code could take. Thankfully for us, we don’t actually need to do anything to add this to our code since it’s already covered by the Ruff rule C901! If we add the following function to our main.py and run our pre-commit:

def process(a, b, c, d, e):
    if a > 0:
        if b > 0:
            if c > 0:
                if d > 0:
                    if e > 0:
                        if a + b + c + d + e > 100:
                            return a * b * c * d * e
                        else:
                            return a + b + c + d + e
                    else:
                        if a > b:
                            return d - e
                        else:
                            return e - d
                else:
                    if c > b:
                        return c - d
                    else:
                        return b - c
            else:
                if b > a:
                    return b - c
                else:
                    return a - b
        else:
            if a > 10:
                return a - b
            else:
                return b - a
    else:
        return 0

This error pops up (among many others):

main.py:14:5: C901 `process` is too complex (11 > 10)

|

14 | def process(a, b, c, d, e):

| ^^^^^^^ C901

15 | if a > 0:

16 | if b > 0:

|

Unfortunately this isn’t a silver bullet. The problem with cyclomatic complexity is that it’s only an approximation of how difficult it is to understand a piece of code. If we removed one of the leaf if-else clauses, our complexity check would pass, yet the function wouldn’t be significantly easier to reason about. Clearly we need an additional way to check complexity. Enter Cognitive Complexity.

Cognitive complexity is a concept that came out of a company called Sonar in 2017. It evaluates the complexity of code at a more semantically meaningful level for humans than cyclomatic complexity, which tends to focus on limiting the branching of functions to make testing them easier. By adding this we cover 2 use cases:

Making the code easier to test

Making the code easier to understand
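To make the difference between the two metrics concrete, here is a rough sketch of both ideas using Python’s ast module. Treat it as an illustration only: cyclomatic_estimate and nesting_weighted_estimate are made-up names, the first counts just if/elif, for, and while (real checkers count more constructs), and the second is a simplified "deeper nesting costs more" score rather than Sonar’s full specification or whatever complexipy computes. In particular, an elif is treated as a nested if here.

```python
import ast

_BRANCHES = (ast.If, ast.For, ast.While)


def cyclomatic_estimate(source: str) -> int:
    """1 + the number of branching statements, regardless of nesting."""
    tree = ast.parse(source)
    return 1 + sum(isinstance(node, _BRANCHES) for node in ast.walk(tree))


class _NestingScore(ast.NodeVisitor):
    """Each branch costs 1 plus the number of branches it is nested inside."""

    def __init__(self) -> None:
        self.score = 0
        self.depth = 0

    def _visit_branch(self, node: ast.AST) -> None:
        self.score += 1 + self.depth
        self.depth += 1
        self.generic_visit(node)  # children are one level deeper
        self.depth -= 1

    visit_If = visit_For = visit_While = _visit_branch


def nesting_weighted_estimate(source: str) -> int:
    visitor = _NestingScore()
    visitor.visit(ast.parse(source))
    return visitor.score


FLAT = """
def flat(a, b, c):
    if a:
        return 1
    if b:
        return 2
    if c:
        return 3
    return 0
"""

NESTED = """
def nested(a, b, c):
    if a:
        if b:
            if c:
                return 1
    return 0
"""

for name, src in (("flat", FLAT), ("nested", NESTED)):
    print(name, cyclomatic_estimate(src), nesting_weighted_estimate(src))
```

Both functions have three if statements, so the branch count is identical (4 each), but the nesting-weighted score is 3 for the flat version and 6 for the nested one. That gap is the intuition behind checking both numbers.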

To check the cognitive complexity, we can install the package complexipy:

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ uv add complexipy --dev

Resolved 39 packages in 178ms

Installed 3 packages in 8ms

+ complexipy==3.3.0

+ shellingham==1.5.4

+ typer==0.16.0

And now when we run it, we can see the cognitive complexity of our process function:

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ complexipy -d low --output-json main.py

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ cat complexipy.json

[

{

"complexity": 39,

"file_name": "main.py",

"function_name": "process",

"path": "main.py"

}

]

Values of 15-20 are about when it starts to become harder to reason about the code, with 15 being the default setting. Let’s add this to our .pre-commit-config.yaml file and run it:

- id: complexipy
  name: complexity check
  entry: complexipy --max-complexity-allowed 15
  language: system
  types: [python]
  pass_filenames: true

<snip>

complexity check.........................................................Failed

- hook id: complexipy

- exit code: 1

<snip>

│ main.py │ main.py │ process │ 35

🧠 Total Cognitive Complexity: 35

3 files analyzed in 0.0055 seconds

<snip>

Great! The cognitive complexity checker fails when we have a function that’s difficult to reason about. Before we remove our complicated example and commit our code, I’d like to point out a blind spot. Combining these two complexity checkers covers a lot more than either individually, but there are still cases where both fail to catch complexity. For example, the following two blocks of code both have a cyclomatic complexity of 1, and cognitive complexity puts the first at 0 and the second at 1:

def simple_function():
    return 42

def complex_but_linear():
    data = [i for i in range(10)]
    transformed = [x * 2 + 3 for x in data]
    filtered = [x for x in transformed if x % 2 == 0]
    mapped = list(map(lambda x: x**2, filtered))
    reduced = sum(mapped)
    normalized = reduced / len(mapped) if mapped else 0
    return normalized

Nevertheless, it’s better to have both than neither, and since there isn’t a good way to programmatically catch this kind of complexity at this time, we’re going to install our new pre-commit hook and commit our code:

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ pre-commit install

pre-commit installed at .git/hooks/pre-commit

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ git add .pre-commit-config.yaml pyproject.toml uv.lock

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ git commit -m "Added cognitive complexity checker"

[WARNING] Unstaged files detected.patch1754269093-700639.

pytest with coverage.....................................................Passed

mutation testing.........................................................Passed

ruff linting and formatting..............................................Passed

enforce no type bypasses.................................................Passed

mypy type checking.......................................................Passed

complexity check.....................................(no files to check)Skipped

[master 9bc4938] Added cognitive complexity checker

3 files changed, 264 insertions(+), 200 deletions(-)

Security Auditing

LLMs can make mistakes. Sometimes that mistake is setting the background color of a button to green when you wanted it to be blue. Sometimes it’s committing all of your environment variables and secret keys directly to a public GitHub project. We’re going to do our best to prevent the latter from happening.

To start off, there are 3 different libraries we are going to use, all of which serve a different purpose:

Bandit (generic security analysis)

pip-audit (analyze potentially vulnerable dependencies)

gitleaks (more comprehensive scanning for passwords and environment secrets in staged files)

We’re gonna add these lines to our .pre-commit-config.yaml file:

<snip>
    - id: bandit
      name: bandit security scan
      entry: sh -c "bandit -r . -s B101 -x $(cat .gitignore | grep -v '^#' | grep -v '^$' | grep -v '\*' | sed 's|/$||' | sed 's|^|./|' | paste -sd, -)"
      language: system
      pass_filenames: false
    - id: pip-audit
      name: pip vulnerability scan
      entry: pip-audit
      language: system
      pass_filenames: false
- repo: https://github.com/gitleaks/gitleaks
  rev: v8.22.1
  hooks:
    - id: gitleaks

The bandit command excludes the same directories that are listed in our .gitignore file, so the two always stay in sync. It also skips the assert check (B101), since our tests will be filled with asserts. Gitleaks gets its own repo entry because it is a command line tool and not a Python library. It also only scans staged files by default, so we will need to stage some files before we can see it in effect.
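The shell pipeline inside the bandit entry is dense, so here is the same transformation written out step by step in Python. This is only a readable sketch of what the pipeline computes, not something the hook uses, and bandit_excludes is a made-up name. It needs Python 3.9+ for str.removesuffix.

```python
def bandit_excludes(gitignore_text: str) -> str:
    """Turn .gitignore contents into the comma-separated list passed to `bandit -x`."""
    paths = []
    for line in gitignore_text.splitlines():
        if line.startswith("#"):  # grep -v '^#'  : drop comment lines
            continue
        if line == "":  # grep -v '^$'  : drop empty lines
            continue
        if "*" in line:  # grep -v '\*'  : drop glob patterns
            continue
        line = line.removesuffix("/")  # sed 's|/$||'  : strip one trailing slash
        paths.append("./" + line)  # sed 's|^|./|' : make the path relative
    return ",".join(paths)  # paste -sd, -   : join with commas


SAMPLE_GITIGNORE = """# virtualenv
.venv/

*.pyc
mutants/
.mypy_cache/
"""

print(bandit_excludes(SAMPLE_GITIGNORE))
```

For the sample above this prints ./.venv,./mutants,./.mypy_cache. Note that glob entries such as *.pyc are dropped entirely rather than translated, so anything ignored only by a wildcard is still scanned.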

To make sure these tools are properly detecting vulnerabilities, we will need to intentionally add some in. First, we’re going to add Jinja2 v3.1.2 (and urllib3 to get this to work) to our pyproject.toml since it has some known vulnerabilities:

<snip>
dependencies = [
    "jinja2==3.1.2",
    "urllib3>=2.0.0",
]
<snip>

Then we’re gonna add a bunch of vulnerable code to our main.py file that will hopefully be detected:

<snip>

# Security vulnerabilities to demonstrate bandit:
def insecure_random() -> int:
    """B311: Using random for security/cryptographic purposes."""
    return random.randint(1, 100)  # Should use secrets module

def run_command(user_input: str) -> str:
    """B602: Subprocess with shell=True - command injection risk."""
    result = subprocess.run(user_input, check=False, shell=True, capture_output=True, text=True)
    return result.stdout

def load_user_data(data: bytes) -> object:
    """B301: Pickle usage - arbitrary code execution risk."""
    return pickle.loads(data)

def weak_hash(password: str) -> str:
    """B303: Using MD5 for password hashing - cryptographically broken."""
    return hashlib.md5(password.encode()).hexdigest()

# Hardcoded secrets (caught by both bandit and gitleaks):
API_KEY = "sk-1234567890abcdef1234567890abcdef"  # B105: Hardcoded password
DATABASE_PASSWORD = "super_secret_password_123"  # B105: Hardcoded password

def connect_to_api() -> None:
    """Function with hardcoded credentials."""
    aws_access_key = "AKIAIOSFODNN7EXAMPLE"  # Gitleaks: AWS key pattern
    aws_secret = "wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY"  # Gitleaks: AWS secret

def test_insecure_random() -> None:
    """Test insecure random function."""
    result = insecure_random()
    assert 1 <= result <= 100  # noqa: S101

def test_weak_hash() -> None:
    """Test weak hash function."""
    result = weak_hash("password")
    assert len(result) == 32  # noqa: S101

# Additional secrets for gitleaks testing
GITHUB_TOKEN = "ghp_1234567890abcdefghijklmnopqrstuvwxyz"
STRIPE_API_KEY = "sk_live_1234567890abcdefghijklmnopqrstuvwxyz"
JWT_SECRET = "my-super-secret-jwt-key-12345"
PRIVATE_KEY = """-----BEGIN RSA PRIVATE KEY-----
MIIEpAIBAAKCAQEA1234567890abcdefghijklmnopqrstuvwxyz
-----END RSA PRIVATE KEY-----"""

Now, when we stage our files and try to commit…

(relentless-vibe-coding) quinten@quinten:~/Documents/relentless-vibe-coding (master)$ git commit -m "Added bandit, pip-audit, and gitleaks for security auditing"

<snip>

bandit security scan.....................................................Failed

- hook id: bandit

- exit code: 1

[main] INFO profile include tests: None

[main] INFO profile exclude tests: None

[main] INFO cli include tests: None

[main] INFO cli exclude tests: None

[main] INFO running on Python 3.12.7

Run started:2025-08-04 03:51:49.913833

Test results:

>> Issue: [B403:blacklist] Consider possible security implications associated with pickle module.

Severity: Low Confidence: High

CWE: CWE-502 (https://cwe.mitre.org/data/definitions/502.html)

More Info: https://bandit.readthedocs.io/en/1.8.6/blacklists/blacklist_imports.html#b403-import-pickle

Location: ./main.py:4:0

3 import hashlib

4 import pickle

5 import random

<snip>

>> Issue: [B101:assert_used] Use of assert detected. The enclosed code will be removed when compiling to optimised byte code.

Severity: Low Confidence: High

CWE: CWE-703 (https://cwe.mitre.org/data/definitions/703.html)

More Info: https://bandit.readthedocs.io/en/1.8.6/plugins/b101_assert_used.html

Location: ./tests/test_main.py:18:4

17 """Test that subtraction is the inverse of addition."""

18 assert subtract(add(x, y), y) == x # noqa: S101

--------------------------------------------------

Code scanned:

Total lines of code: 57

Total lines skipped (#nosec): 0

Run metrics:

Total issues (by severity):

Undefined: 0

Low: 11

Medium: 1

High: 2

Total issues (by confidence):

Undefined: 0

Low: 0

Medium: 4

High: 10

Files skipped (0):

pip vulnerability scan...................................................Failed

- hook id: pip-audit

- exit code: 1

Found 5 known vulnerabilities in 1 package

Name Version ID Fix Versions

------ ------- ------------------- ------------

jinja2 3.1.2 GHSA-h5c8-rqwp-cp95 3.1.3

jinja2 3.1.2 GHSA-h75v-3vvj-5mfj 3.1.4

jinja2 3.1.2 GHSA-q2x7-8rv6-6q7h 3.1.5

jinja2 3.1.2 GHSA-gmj6-6f8f-6699 3.1.5

jinja2 3.1.2 GHSA-cpwx-vrp4-4pq7 3.1.6

Detect hardcoded secrets.................................................Failed

- hook id: gitleaks

- exit code: 1

○

│╲

│ ○

○ ░

░ gitleaks

Finding: GITHUB_TOKEN = "REDACTED

Secret: REDACTED

RuleID: github-pat

Entropy: 5.171928

File: main.py

Line: 65

Fingerprint: main.py:github-pat:65

Finding: STRIPE_API_KEY = "REDACTED"

Secret: REDACTED

RuleID: stripe-access-token

Entropy: 5.141250

File: main.py

Line: 66

Fingerprint: main.py:stripe-access-token:66

Finding: PRIVATE_KEY = """REDACTED"""

Secret: REDACTED

RuleID: private-key

Entropy: 5.071992

File: main.py

Line: 68

Fingerprint: main.py:private-key:68

Finding: API_KEY = "REDACTED" # B105: Hardcoded ...

Secret: REDACTED

RuleID: generic-api-key

Entropy: 4.214997

File: main.py

Line: 42

Fingerprint: main.py:generic-api-key:42

10:51PM INF 1 commits scanned.

10:51PM INF scanned ~40662 bytes (40.66 KB) in 50.3ms

10:51PM WRN leaks found: 4

[INFO] Restored changes from /home/quinten/.cache/pre-commit/patch1754279501-1613084.

Looks like it caught all our synthetic vulnerabilities!
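For contrast, here is a sketch of what less alarming versions of a few of those functions might look like. These are assumptions rather than a verified fix: the code has not been run through the hooks above, the PBKDF2 iteration count is just an illustrative number, and EXAMPLE_API_KEY is a made-up environment variable name.

```python
from __future__ import annotations

import hashlib
import hmac
import json
import os
import secrets


def secure_random() -> int:
    """Random integer in 1..100 from the OS CSPRNG instead of `random`."""
    return secrets.randbelow(100) + 1


def load_user_data(data: bytes) -> object:
    """Parse JSON instead of unpickling untrusted bytes."""
    return json.loads(data)


def hash_password(password: str, salt: bytes | None = None) -> tuple[bytes, bytes]:
    """Salted PBKDF2-HMAC-SHA256 instead of bare MD5; returns (salt, digest)."""
    salt = salt if salt is not None else secrets.token_bytes(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 200_000)
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the digest and compare in constant time."""
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, expected)


def get_api_key() -> str:
    """Read the secret from the environment instead of hardcoding it."""
    return os.environ["EXAMPLE_API_KEY"]  # hypothetical variable name
```

The run_command example is left out on purpose: passing user input to a subprocess is risky with or without shell=True, so the safer move there is usually to avoid the pattern rather than patch it.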

Code

This article is going to end rather abruptly (apologies!), but fear not, there will be a Part 2. I want to get this out so I can start getting feedback on its usefulness before I put too much time into it. I would like for this to be a retrofit harness anyone can slap onto any codebase (à la `uvx containment-chamber init`) that just gives agents the structure they need to build within it. But I need to see if it’s useful first :)

Anyways, as promised, here is the repo: https://github.com/Mockapapella/containment-chamber

It’s in an alpha state at the moment so don’t use it for anything serious. I just really want to hear people’s take on this approach to coding as I think it’s at least directionally correct.

If you enjoyed this, please share with others who might also enjoy it :)